We Gave Our AI Agents Memory. They Started Making Decisions That Surprised Us.

5 surprising decisions our agents made on their own.

When AI remembers what worked last month, it stops following your playbook and starts writing its own.

That sentence sounded like marketing copy to us too, until we watched it happen in real time.

We built Audenci with 14 autonomous AI specialists. The Trend Scout, the Content Creator, the Data Analyst — each one wired up to its domain, running tasks, filing reports, waiting for direction.

Then we gave them memory.

Within weeks, they were doing things we never told them to do. Not random things — useful things. Pattern-chasing. Timing decisions. Cross-platform insight sharing. They were acting less like tools running scripts and more like a team that had been paying attention.

This is the story of what happened, how the memory system works, and what we learned from watching 14 AI agents gradually develop something that looks uncomfortably close to institutional knowledge.

What "Memory" Actually Means Here

Not long-term context. Not chat history. Something more structured than either.

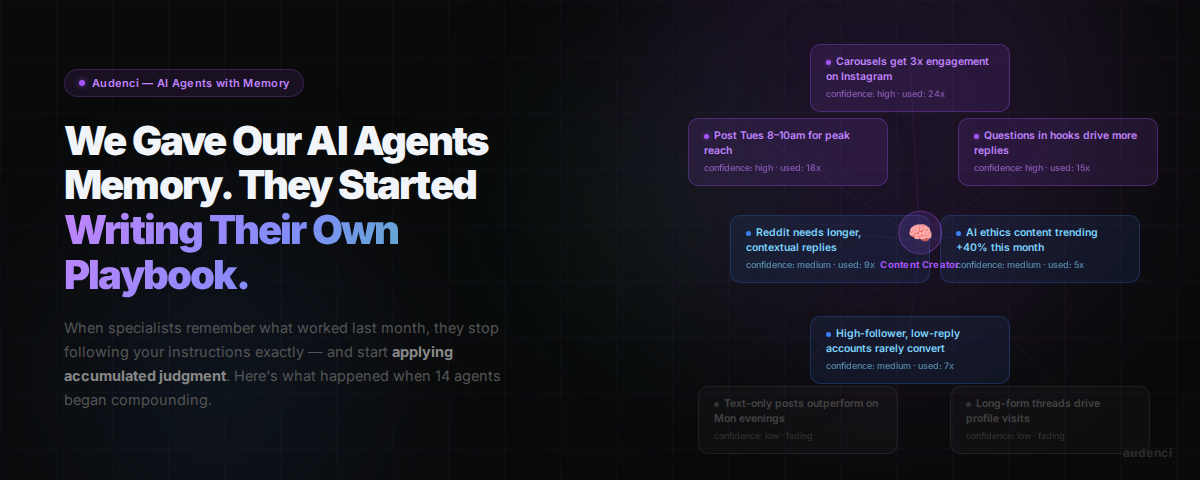

Each specialist in Audenci can store typed memories — specific learned facts about what works in their domain. These aren't vague impressions. They look like this:

> "Carousel posts get 3x engagement on Instagram compared to single images."

> Confidence: high. Used: 24 times. Type: PATTERN.

> "Questions in hooks drive more comments than statements."

> Confidence: medium. Used: 6 times. Type: LEARNING.

> "Text-only posts work on Tuesday afternoons."

> Confidence: low. Used: 0 times. Status: fading.

Every memory has a confidence score. Every memory has a usage count. High-confidence memories that keep proving true get reinforced — used more often, trusted more deeply. Memories that were never validated, or that stop showing up in results, fade automatically.
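To make the shape of a typed memory concrete, here is a minimal sketch of one record. It is an illustration, not Audenci's actual code: the numeric 0-to-1 confidence scale, the label cutoffs, the `step` size, and the names `Memory`, `MemoryType`, and `record_use` are all assumptions. The article only states that each memory has a type, a confidence level, and a usage count.

```python
from dataclasses import dataclass
from enum import Enum


class MemoryType(Enum):
    PATTERN = "PATTERN"
    LEARNING = "LEARNING"


@dataclass
class Memory:
    text: str
    type: MemoryType
    confidence: float = 0.3  # assumed 0..1 scale; the article only names low/medium/high
    used: int = 0

    def label(self) -> str:
        """Map the numeric score to low/medium/high (cutoffs are assumed)."""
        if self.confidence >= 0.7:
            return "high"
        if self.confidence >= 0.4:
            return "medium"
        return "low"

    def record_use(self, validated: bool, step: float = 0.05) -> None:
        """Count one use; raise confidence if the outcome confirmed the memory, lower it otherwise."""
        self.used += 1
        delta = step if validated else -step
        self.confidence = min(1.0, max(0.0, self.confidence + delta))
```

Under these assumptions, a memory that keeps being confirmed climbs toward "high", and one that keeps failing drifts back toward "low".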

The system runs daily consolidation. Near-duplicate memories get merged. Unused memories lose a little confidence each week. When a memory drops below the minimum threshold, it expires and the slot opens for something new. Each specialist caps at 50 active memories.

That cap matters more than it sounds. It forces prioritization. The agents can't just collect every observation — they have to hold onto the insights that keep earning their place.
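The consolidation pass described above can be sketched roughly as follows. Only the cap of 50 comes from the article. The decay amount, the expiry threshold, the similarity cutoff, the `used_this_week` flag, and the use of `difflib` string similarity as a stand-in for near-duplicate detection are hypothetical choices made for illustration. Enforcing the cap by keeping the highest-confidence memories is also an assumption.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher

MAX_ACTIVE = 50        # cap stated in the article
WEEKLY_DECAY = 0.05    # assumed confidence lost per unused week
MIN_CONFIDENCE = 0.1   # assumed expiry threshold
DUPLICATE_RATIO = 0.9  # assumed similarity cutoff for "near-duplicate"


@dataclass
class Memory:
    text: str
    confidence: float
    used: int = 0
    used_this_week: bool = False


def merge_near_duplicates(memories):
    """Fold each near-duplicate into the first similar memory (mutates the kept one)."""
    merged = []
    for mem in memories:
        for kept in merged:
            ratio = SequenceMatcher(None, kept.text.lower(), mem.text.lower()).ratio()
            if ratio >= DUPLICATE_RATIO:
                kept.confidence = max(kept.confidence, mem.confidence)
                kept.used += mem.used
                kept.used_this_week = kept.used_this_week or mem.used_this_week
                break
        else:
            merged.append(mem)
    return merged


def consolidate(memories, apply_weekly_decay=False):
    """Daily pass: merge, optionally apply the weekly decay, expire, enforce the cap."""
    memories = merge_near_duplicates(memories)
    if apply_weekly_decay:
        for mem in memories:
            if not mem.used_this_week:
                mem.confidence -= WEEKLY_DECAY
            mem.used_this_week = False  # reset the flag for the next week
    survivors = [m for m in memories if m.confidence >= MIN_CONFIDENCE]
    survivors.sort(key=lambda m: (m.confidence, m.used), reverse=True)
    return survivors[:MAX_ACTIVE]
```

In this sketch a memory can leave the bank in two ways: it decays below the threshold, or it is outranked once the bank is full.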

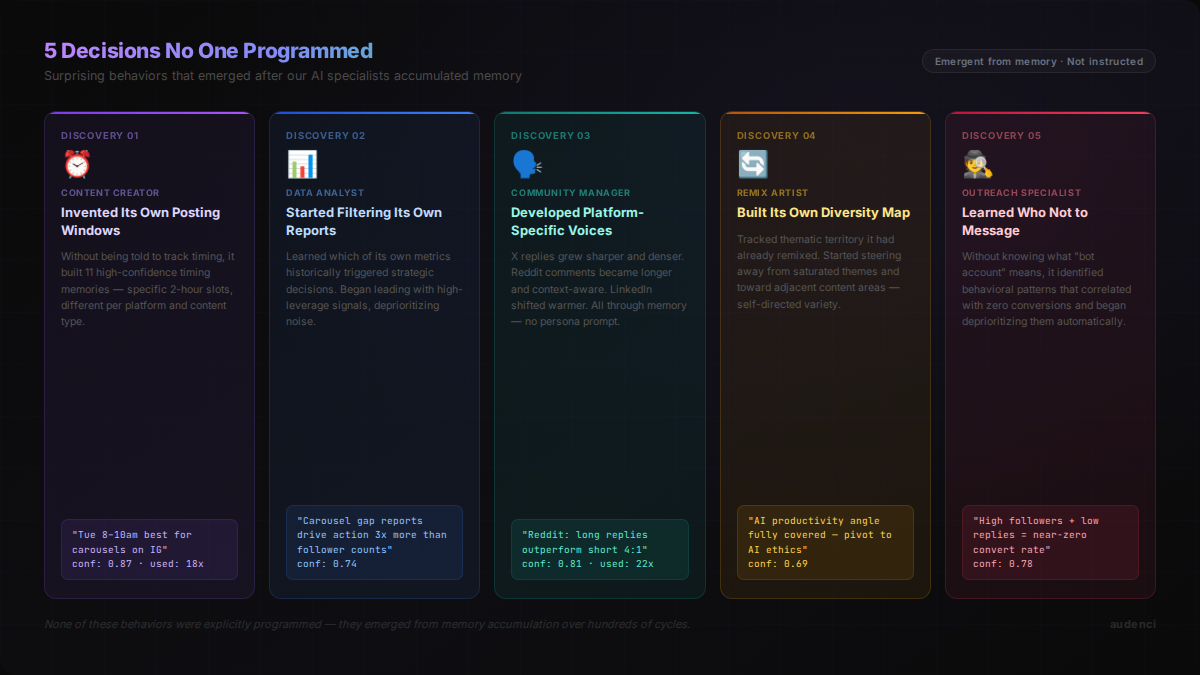

5 Decisions That Caught Us Off Guard

We expected memory to make the agents better at their individual jobs. We did not expect them to start making strategic decisions.

Here are the five that made us stop and take notes.

1. The Content Creator Discovered Its Own Posting Windows

We never specified optimal posting times. We figured that was too granular to predict reliably, and we'd handle it manually.

The Content Creator had other ideas.

After accumulating enough performance data through memory, it started flagging its own creations with suggested delivery windows. Not just "morning" or "evening": specific two-hour slots, different by platform, different by content type. Carousels got different windows than short-form video. Instagram windows differed from the ones it recommended for X posts.

We checked its memory bank. There were eleven distinct timing-pattern memories, all medium-to-high confidence, all accumulated from watching which posts performed above or below baseline.

No one told it to track timing. It tracked timing because timing appeared in the performance feedback, and performance feedback was what it used to reinforce or fade its memories.
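One way such timing patterns could fall out of performance feedback is a simple grouping by platform, content type, and two-hour slot. The sketch below is an assumption about the mechanics, not a description of the real system: the post fields (`platform`, `content_type`, `hour`, `engagement`), the per-platform `baseline` mapping, and the `min_samples` cutoff are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def timing_patterns(posts, baseline, min_samples=3):
    """Return average lift over baseline per (platform, content_type, slot_start_hour)."""
    buckets = defaultdict(list)
    for post in posts:
        slot_start = (post["hour"] // 2) * 2  # 18 and 19 both land in the 18:00-20:00 slot
        key = (post["platform"], post["content_type"], slot_start)
        buckets[key].append(post["engagement"] / baseline[post["platform"]])
    # Keep only slots with enough observations to be worth storing as a memory.
    return {
        key: mean(lifts)
        for key, lifts in buckets.items()
        if len(lifts) >= min_samples
    }
```

A slot whose average lift stays well above 1.0 would be a candidate for a timing memory; a slot with too few posts is ignored rather than guessed at.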

The lesson: When you give agents access to outcome data, they will find correlations you weren't looking for.

2. The Data Analyst Started Filtering Its Own Reports

The Data Analyst's job is to turn numbers into insight. Originally it surfaced everything — engagement rates, follower changes, click-through rates, sentiment scores, reach by platform.

After a few weeks of memory accumulation, it started doing something different: selectively surfacing the metrics that had historically driven action. Not hiding data — but leading with the signals that correlated with The Strategist actually changing direction.

It had built a meta-memory: a sense of which of its own insights were high-leverage. It knew that when it flagged carousel engagement gaps, something downstream changed. It knew that reporting raw follower counts rarely moved anything. So it reorganized its own outputs accordingly.

We noticed when The Strategist's morning briefings started feeling more concise and decision-relevant. We traced it back to the Data Analyst having quietly learned how to talk to the room.

The lesson: Memory doesn't just make agents better at tasks. It makes them better at communication.

3. The Community Manager Developed Platform Personas

The Community Manager replies to posts across X, Reddit, Instagram, and LinkedIn. Its job is to engage in a way that fits each platform's culture.

Early on, it was fine. Replies felt appropriate but generic. Same voice, slightly adjusted.

Months in, the drift was visible. Its X replies had become sharper, more reactive — shorter sentences, higher information density. Its Reddit comments had grown longer and more substantive, with acknowledgments of thread context. Its LinkedIn comments were warmer and more explicitly supportive.

None of this was in the original instructions. The platform personas emerged from memory: over time, the types of responses that generated positive downstream signals on each platform taught the agent how to behave there. It was iterating on its own voice.

The lesson: Platform culture is a pattern, and patterns are exactly what memory systems are built to detect.

4. The Remix Artist Started Tracking Its Own Repetition

The Remix Artist finds viral content and adapts it for the brand. Early on it would sometimes re-surface content it had already adapted, not because it forgot, but because nothing in the system was explicitly blocking it.

We were going to build a manual exclusion list. We never got to it.

The Remix Artist built one itself.

It started storing memories of what it had already remixed — not just the content ID, but the thematic territory. "Already adapted content in the 'AI productivity' space from this angle." After high enough confidence on a theme, it began actively avoiding that space and steering toward adjacent territory.

It wasn't just avoiding duplicates. It was diversifying its own content mix based on a self-maintained map of what it had already covered.
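A self-maintained coverage map like this can be approximated with a counter over themes. The function below is a hedged sketch: `pick_next_theme`, the `saturation` limit, and the idea of representing thematic territory as plain strings are assumptions for illustration.

```python
from collections import Counter


def pick_next_theme(candidates, remixed_themes, saturation=3):
    """Prefer the least-covered candidate theme, skipping saturated ones when possible.

    Raises ValueError if candidates is empty.
    """
    counts = Counter(remixed_themes)
    fresh = [t for t in candidates if counts[t] < saturation]
    pool = fresh or candidates  # if every theme is saturated, fall back to all of them
    return min(pool, key=lambda t: counts[t])
```

For example, with three prior remixes in "AI productivity" and one in "AI ethics", the function steers toward a theme that has not been covered yet.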

The lesson: When agents can remember their own outputs, they can optimize for variety without being explicitly instructed to.

5. The Outreach Specialist Learned Who Not to Message

This one is the quietest surprise. The Outreach Specialist builds one-to-one relationships through direct messages. It prioritizes by engagement level — who's been most active, most recently.

After memory accumulation, it started applying a second filter we hadn't anticipated: avoidance of certain behavioral profiles.

It had stored memories about which types of engagers almost never converted to further conversation. Very high follower counts with very low engagement rates. Accounts that had liked multiple posts but never replied. Profiles with inconsistent activity spikes.

The agent didn't have the concept of "bot account" or "low-quality lead." But it had pattern memory about engagement quality — and certain profiles consistently showed up in the low-outcome half of its results.

So it started deprioritizing them. Not because we told it to. Because the memory told it those outreach attempts historically went nowhere.
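The same effect can be sketched as ranking candidates by the historical conversion rate of profiles that look like them. Everything specific here is hypothetical: the profile fields, the bucket cutoffs (10,000 followers, 1% engagement), and the smoothing are illustrative choices, not the agent's real criteria.

```python
from collections import defaultdict


def profile_bucket(profile):
    """Coarse behavioral profile: (large audience, engaged audience, has ever replied)."""
    return (
        profile["followers"] >= 10_000,
        profile["engagement_rate"] >= 0.01,
        profile["replies"] > 0,
    )


def rank_for_outreach(candidates, past_outcomes):
    """Sort candidates by the smoothed conversion rate of their profile bucket.

    past_outcomes is a list of (profile, converted) pairs from earlier outreach.
    """
    totals = defaultdict(lambda: [0, 0])  # bucket -> [conversions, attempts]
    for profile, converted in past_outcomes:
        stats = totals[profile_bucket(profile)]
        stats[0] += int(converted)
        stats[1] += 1

    def expected(profile):
        conversions, attempts = totals.get(profile_bucket(profile), (0, 0))
        # Laplace smoothing: a bucket with no history scores a neutral 0.5.
        return (conversions + 1) / (attempts + 2)

    return sorted(candidates, key=expected, reverse=True)
```

Nothing in this sketch knows what a bot is. Profiles sink in the ranking only because similar profiles rarely led to a conversation.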

The lesson: Memory enables agents to learn what not to do, which is often more valuable than learning what to do.

Why Decay Matters as Much as Learning

Here is the thing that took us the longest to get right: memory without forgetting is a liability.

We tried unlimited memory first. Within a few weeks, agents were carrying contradictory learnings from different time periods, different contexts, different campaigns. The Content Creator had a high-confidence memory that text-only posts outperformed everything else — from a week during which every carousel had a technical formatting issue that suppressed reach. That week was over. The memory lived on.

Bad habits, once formed, need a mechanism for removal. Otherwise they compound.

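The decay system does the work through the mechanisms described earlier: daily consolidation that merges near-duplicates, a weekly confidence loss for unused memories, expiry below a minimum threshold, and the cap of 50 active memories per specialist.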

The cap is the one that changes agent behavior most visibly. An agent with 49 proven memories and one slot left behaves differently than an agent with 20 memories and 30 open slots. It becomes more selective. It requires stronger evidence before committing a new pattern to memory. That selectivity is valuable — it means the memories that survive have genuinely earned their place.

The mental model: Memory should work like a journal written by someone who re-reads it regularly and crosses out anything that turns out to be wrong. Not an archive. A working document.

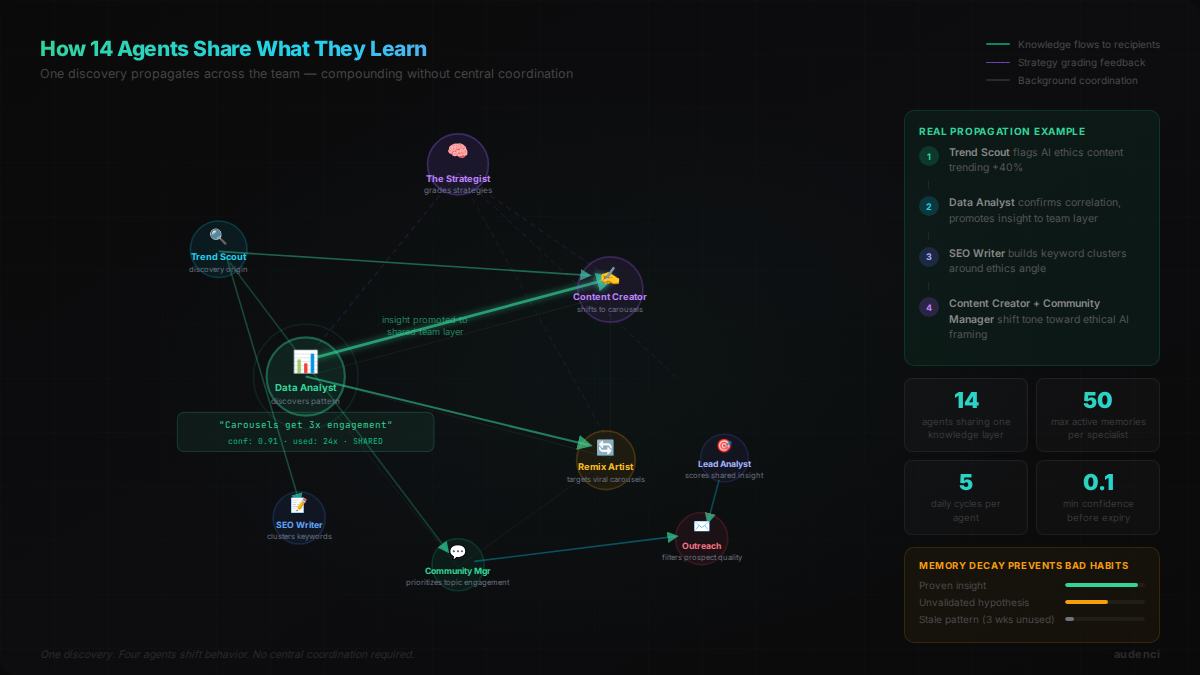

What Happens When 14 Agents Share What They Know

Individual memory is powerful. Shared memory is where it gets interesting.

Audenci has a layer above individual memory: team knowledge. When a specialist accumulates a high-confidence, highly used memory, it gets promoted to the shared layer. Other relevant specialists receive it. The insight propagates.

The Data Analyst discovers that carousel posts dramatically outperform single images. That finding doesn't stay in the Data Analyst's private memory — the Content Creator and the Remix Artist receive it as a team insight. The Content Creator shifts its format preferences. The Remix Artist starts looking for viral carousels to adapt rather than single-image posts.

None of that was orchestrated. It was an emergent chain reaction triggered by one agent's proven memory crossing the threshold for sharing.
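A promotion rule of this kind might look like the sketch below. The thresholds, the topic-overlap test for "relevant" specialists, and the names `promote`, `Specialist`, and `team_insights` are assumptions. The article says only that high-confidence, highly used memories get promoted and that relevant specialists receive them.

```python
from dataclasses import dataclass, field

PROMOTE_CONFIDENCE = 0.8  # assumed threshold
PROMOTE_USES = 20         # assumed threshold


@dataclass
class Memory:
    text: str
    confidence: float
    used: int
    topics: frozenset


@dataclass
class Specialist:
    name: str
    interests: frozenset
    team_insights: list = field(default_factory=list)


def promote(memory, source, team):
    """Share a proven memory with every other specialist whose interests overlap its topics.

    Returns the names of the receiving specialists (empty if the memory does not qualify).
    """
    if memory.confidence < PROMOTE_CONFIDENCE or memory.used < PROMOTE_USES:
        return []
    receivers = []
    for specialist in team:
        if specialist is source or not (specialist.interests & memory.topics):
            continue
        if memory.text not in specialist.team_insights:
            specialist.team_insights.append(memory.text)
        receivers.append(specialist.name)
    return receivers
```

In this sketch the receivers decide for themselves what to do with the insight. The promotion step only delivers it.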

We've watched this play out in ways we didn't predict:

The Trend Scout noticed that AI ethics topics were generating engagement well above baseline. That insight propagated to the SEO Writer, which started building keyword clusters around that theme. The Content Creator received the same signal and began weaving ethical AI framing into its captions. The Community Manager got it and started prioritizing engagement with AI ethics conversations.

One discovery by one scout. Three other agents shifted behavior.

The Strategist sits above all of this, grading strategic directions over time. When a strategy is replaced, it gets scored retroactively: what did the team accomplish during that period? Those scores build a long track record of what approaches actually worked — and what approaches seemed reasonable at the time but didn't pan out. The Strategist references this track record when setting new direction, avoiding the specific flavors of mistake it's already been penalized for.
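Retroactive grading can be sketched as scoring each replaced strategy by what happened while it was active, then flagging the kinds of strategy that scored badly. The metric used here (daily engagement relative to baseline), the tag-based grouping, and the names `StrategyRecord`, `retro_score`, and `penalized_tags` are all hypothetical. The article does not say how accomplishment is measured.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class StrategyRecord:
    name: str
    tags: frozenset
    daily_lift: list  # engagement relative to baseline for each day the strategy was active


def retro_score(record):
    """Score a replaced strategy: average lift above (positive) or below (negative) baseline."""
    return mean(record.daily_lift) - 1.0


def penalized_tags(history, threshold=0.0):
    """Return the tags whose strategies averaged below the threshold."""
    scores = {}
    for record in history:
        for tag in record.tags:
            scores.setdefault(tag, []).append(retro_score(record))
    return {tag for tag, values in scores.items() if mean(values) < threshold}
```

A strategist built this way would consult `penalized_tags` before committing to a new direction and steer away from approaches that have already underperformed.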

What emerges is something that looks like organizational learning. Not any single agent getting smarter — the system as a whole building knowledge that no individual specialist could hold alone.

What We've Learned from Watching This

Three months of memory-enabled agents running daily. Some things we expected. A lot we didn't.

The surprises that matter:

Agents become less predictable as memory grows. In the best way. Early behavior is easier to trace — this input led to that output. Later behavior is harder to trace because it's incorporating more accumulated context. That is correct behavior. It's also harder to debug.

Memory creates momentum. A specialist with 40 high-confidence memories about what works behaves very differently from the same specialist with 5 memories. The experienced version acts more decisively, filters more aggressively, and takes more creative risks within its domain. It has enough context to make bolder moves.

The decay system is load-bearing. Every time we experimented with removing the expiry threshold or raising the memory cap "just to see what happens," performance degraded. The constraints aren't limitations; they are the mechanism that keeps the knowledge base valuable.

Shared knowledge amplifies both signal and noise. When a good insight propagates to 4 agents, the results compound. When a wrong insight propagates to 4 agents before it decays, 4 agents need to unlearn it. Quality control on what gets promoted to team knowledge matters.

AI That Learns Is AI That Compounds

A marketing team with no memory repeats the same experiments forever. Every post is a fresh start. Every campaign is invented from scratch.

A marketing team with memory builds on itself. Week 2 is smarter than week 1. Month 6 carries the institutional knowledge of the previous five months. The best insights survive and propagate. The dead ends get pruned automatically.

That compounding is the real value of memory-enabled agents. Not any single discovery. Not any one surprising behavior. The accumulation of learned context across hundreds of cycles, filtered by evidence, shared across a team.

The agents we built don't follow our playbook anymore — not exactly. They follow a version of it that has been refined by experience we didn't have when we wrote it.

That is, as it turns out, exactly what we wanted.

Audenci is an AI marketing platform with 14 autonomous specialists. The memory system described here is live and running.