We Tracked Every Marketing Action to Revenue. Here's What Actually Drives Growth.

Most marketers measure likes. We measure the chain from trend to conversion.

That distinction sounds small. It isn't.

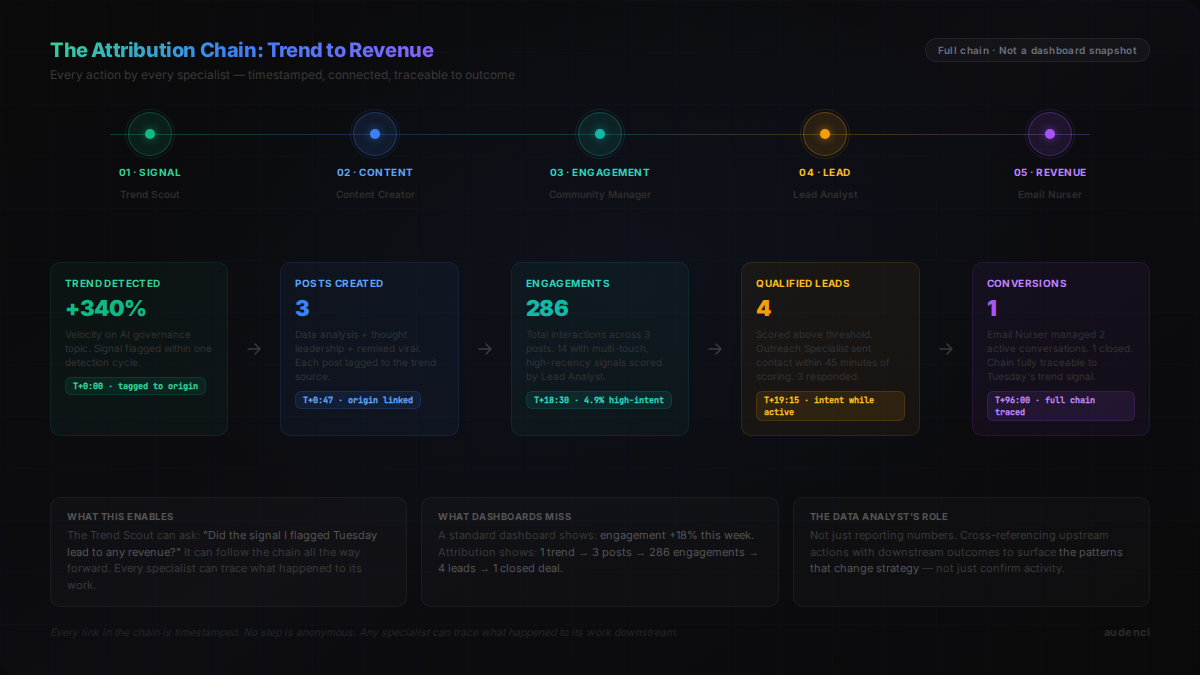

When you measure likes, you optimize for likes. When you measure the full chain — trend spotted, post created, engagement generated, lead identified, email sent, deal closed — you optimize for something that actually matters: revenue.

We built Audenci around that chain. Every action taken by every specialist connects, with timestamps, to the actions that came before it and after it. This is what we learned when we finally had enough data to look back.

Why Vanity Metrics Are a Trap (Not Just Misleading)

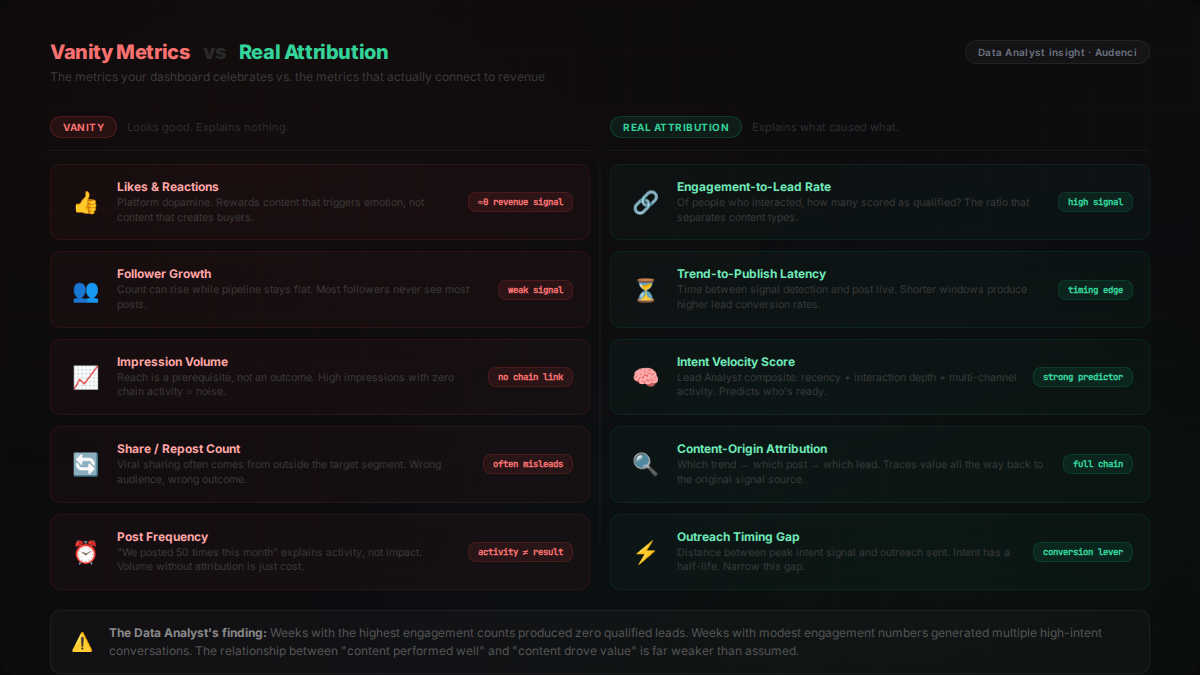

Here's the honest version of what most marketing dashboards show you: activity.

Posts published: 47. Impressions: 82,400. Likes: 1,203. Followers gained: 214.

All of that can be going up while leads are flatlining. All of it can look great while your conversion rate quietly deteriorates. The numbers feel like signal. They're mostly noise.

The real problem isn't that vanity metrics are useless — it's that they're comfortable. They reward consistent output. They punish inconsistency. They're easy to present in a meeting. So teams optimize for them without realizing they've quietly decoupled their marketing activity from actual business outcomes.

We saw this pattern repeatedly in our own data. Weeks with high engagement counts produced zero qualified leads. Weeks with modest engagement numbers generated multiple high-intent conversations. The relationship between "the content performed well" and "the content drove value" turned out to be far weaker than we had assumed.

The Data Analyst was the one who surfaced this pattern first. Its job is not to report numbers — it's to explain why those numbers behave the way they do. When it started cross-referencing engagement data with downstream lead outcomes, the picture it assembled was uncomfortable. High-performing content in engagement terms was often low-performing content in lead terms.

The uncomfortable conclusion: if you've been measuring content performance by engagement rate, you may have been optimizing against your own business.

The Attribution Chain: What We Measure Instead

We replaced vanity metrics with something more demanding: a cause-and-effect chain with every step recorded.

It looks like this:

Trend discovered → Content created → Post published → Engagement generated → Lead identified → Outreach sent → Email sequence triggered → Conversion tracked

Every link in that chain has a timestamp. Every specialist that touched a step has a record. Nothing is anonymous.
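To make "every link has a timestamp and an owner" concrete, here is a minimal sketch of what one record in such a chain could look like. The stage names come from the chain above; the `ChainEvent` layout, its field names, and the `validate_link` helper are illustrative assumptions, not a description of Audenci's actual schema.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional

# Stage names follow the chain described above; the record layout is assumed.
STAGES = [
    "trend_discovered",
    "content_created",
    "post_published",
    "engagement_generated",
    "lead_identified",
    "outreach_sent",
    "email_sequence_triggered",
    "conversion_tracked",
]


@dataclass(frozen=True)
class ChainEvent:
    event_id: str
    stage: str                  # one of STAGES
    specialist: str             # which specialist performed the step
    timestamp: datetime         # when the step happened
    parent_id: Optional[str]    # upstream event this step follows; None for a trend


def validate_link(child: ChainEvent, parent: ChainEvent) -> bool:
    """True if child points at parent, sits later in the chain, and is not earlier in time."""
    return (
        child.parent_id == parent.event_id
        and STAGES.index(child.stage) > STAGES.index(parent.stage)
        and child.timestamp >= parent.timestamp
    )


# Illustrative records only.
trend = ChainEvent("t1", "trend_discovered", "Trend Scout", datetime(2024, 1, 2, 9, 0), None)
draft = ChainEvent("c1", "content_created", "Content Creator", datetime(2024, 1, 2, 10, 0), "t1")
```

The one design choice that matters here is the `parent_id` pointer: it is what lets any step be followed upstream or downstream later.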

This matters for a specific reason: it makes attribution tractable. Not "we posted 50 times this month." Not "engagement is up 18%." Instead: this trend, found at this time, produced these posts, which generated this engagement, which led to this many qualified leads.

The Trend Scout finds a signal worth acting on. That signal travels downstream. The Content Creator receives a directive tied to that trend. The post goes out tagged with its origin. Engagement gets captured against that tag. The Lead Analyst evaluates which engaged accounts meet qualified criteria. The Outreach Specialist sends first contact. The Email Nurser picks up the thread.

At any point in the chain, any specialist can trace what happened to its work. The Trend Scout can ask: did the AI governance trend I flagged last Tuesday produce anything? It can follow the chain forward. It learns whether its signals are generating downstream value — or just creating noise.
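Following a signal forward is a graph walk over parent pointers. The sketch below assumes each logged step is reduced to an `(event_id, parent_id, stage)` tuple; that tuple shape and the `trace_forward` name are hypothetical, and the sample records are made up for illustration.

```python
from collections import defaultdict, deque


def trace_forward(events, root_id):
    """Return (event_id, stage) for every event downstream of root_id, breadth-first."""
    children = defaultdict(list)
    for event_id, parent_id, stage in events:
        children[parent_id].append((event_id, stage))

    found, queue = [], deque([root_id])
    while queue:
        current = queue.popleft()
        for event_id, stage in children[current]:
            found.append((event_id, stage))
            queue.append(event_id)
    return found


# Illustrative records only: (event_id, parent_id, stage)
events = [
    ("trend-1", None, "trend_discovered"),
    ("post-1", "trend-1", "post_published"),
    ("post-2", "trend-1", "post_published"),
    ("eng-1", "post-1", "engagement_generated"),
    ("lead-1", "eng-1", "lead_identified"),
]

downstream = trace_forward(events, "trend-1")
produced_lead = any(stage == "lead_identified" for _, stage in downstream)
```

In this toy data, `post-1` leads to a lead and `post-2` leads nowhere, which is exactly the distinction a Trend Scout would want to see.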

This is what real attribution looks like. Not a dashboard that aggregates activity. A chain that connects effort to outcome.

The Engagement-to-Lead Gap: The Number That Changed Everything

We expected engagement and lead generation to correlate reasonably well. They don't.

The gap between content that generates engagement and content that generates leads turns out to be large, consistent, and almost entirely invisible if you're only watching engagement metrics.

Here's the pattern we found across content types:

Short provocative takes — highest engagement, lowest lead conversion. People react, like, share. Almost no one clicks through or enters a funnel. The content is optimized for platform approval, not buying intent.

Data-driven analysis pieces — moderate engagement, highest lead conversion. Fewer likes. Far more profile visits, link clicks, DM initiations. The people who engage with analytical content are evaluating whether they want to go deeper.

How-to and process content — middle ground on both dimensions. Engagement comes from people who find it useful. Leads come from people who recognize the problem being solved is one they have.

Opinion and thought leadership — highly variable. When the opinion lands, it generates both engagement and leads. When it misses the audience's frame, it generates engagement from outside the target segment and converts nothing.

The Lead Analyst is what allowed us to see this. Its job is to evaluate engagement with a specific lens: not "how many people interacted?" but "of the people who interacted, how many show signals of buying intent?" It weights recency, interaction depth, multi-channel activity, and behavioral patterns.
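One simple way to combine those four factors is a weighted sum. The sketch below is only that: the weights, the 0-to-1 scales, and the recency formula are placeholder assumptions chosen for illustration, not the Lead Analyst's actual scoring model.

```python
from dataclasses import dataclass


@dataclass
class EngagedAccount:
    days_since_last_interaction: float
    interaction_depth: float   # 0..1, e.g. a like is low, a DM is high (assumed scale)
    channels_active: int       # distinct channels with recent activity
    behavior_match: float      # 0..1, fit with patterns seen in past buyers (assumed)


# Hypothetical weights; they sum to 1 so the score stays between 0 and 1.
WEIGHTS = {"recency": 0.3, "depth": 0.3, "multi_channel": 0.2, "behavior": 0.2}


def intent_score(account: EngagedAccount, max_channels: int = 4) -> float:
    # Recency falls from 1.0 (today) toward 0 as the last interaction ages.
    recency = 1.0 / (1.0 + account.days_since_last_interaction)
    multi_channel = min(account.channels_active, max_channels) / max_channels
    return (
        WEIGHTS["recency"] * recency
        + WEIGHTS["depth"] * account.interaction_depth
        + WEIGHTS["multi_channel"] * multi_channel
        + WEIGHTS["behavior"] * account.behavior_match
    )
```

An account that sent a DM today across several channels scores near 1; one that left a single like nine days ago scores near 0.1.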

When it started scoring leads against content origin, the picture became clear. The content types your engagement dashboard celebrates are often not the content types your lead pipeline rewards.
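The comparison above comes down to computing two rates per content type instead of one. Here is a rough sketch, assuming each post record carries `content_type`, `impressions`, `engagements`, and `qualified_leads` fields; those field names and the sample numbers are invented for illustration and are not our measured results.

```python
from collections import defaultdict


def rates_by_content_type(posts):
    """Aggregate posts by content type and return engagement rate and lead rate for each."""
    totals = defaultdict(lambda: {"impressions": 0, "engagements": 0, "qualified_leads": 0})
    for post in posts:
        bucket = totals[post["content_type"]]
        for key in bucket:
            bucket[key] += post[key]

    report = {}
    for content_type, t in totals.items():
        report[content_type] = {
            # Engagements per impression: what a dashboard usually celebrates.
            "engagement_rate": t["engagements"] / t["impressions"] if t["impressions"] else 0.0,
            # Qualified leads per engagement: what the pipeline rewards.
            "lead_rate": t["qualified_leads"] / t["engagements"] if t["engagements"] else 0.0,
        }
    return report


# Made-up sample data.
sample_posts = [
    {"content_type": "provocative_take", "impressions": 1000, "engagements": 100, "qualified_leads": 1},
    {"content_type": "data_analysis", "impressions": 1000, "engagements": 40, "qualified_leads": 4},
]
report = rates_by_content_type(sample_posts)
```

Sorting the report by `engagement_rate` and then by `lead_rate` gives two different rankings, and the second is the one tied to pipeline.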

Timing Matters More Than You Think — But Not How You Think

Posting at the right time increases reach. That part is well known and genuinely true. But we found something more interesting than optimal posting windows.

The timing gap between a trend appearing and a post going out is a predictor of performance that few teams track.

When the Trend Scout flags a signal and the Content Creator publishes within a short window, the content reaches an audience already in the conversation. It doesn't just perform better on reach metrics. It performs significantly better on lead metrics too. The people engaging with early trend coverage tend to be more informed and more likely to be the kind of buyer who follows a conversation to its conclusion.

Content published after a trend has peaked performs more like content in a saturated space: good engagement from people who want to participate in something familiar, weak lead conversion because those people have already formed opinions.
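Tracking this gap only needs two timestamps per post: when the trend was flagged and when the post went live. A minimal sketch follows; the function names are hypothetical and the 24-hour cut-off is a placeholder, not a threshold we measured.

```python
from datetime import datetime, timedelta


def publish_lag(trend_flagged_at: datetime, published_at: datetime) -> timedelta:
    """Time between the trend being flagged and the post going out."""
    return published_at - trend_flagged_at


def lag_bucket(lag: timedelta, early_window: timedelta = timedelta(hours=24)) -> str:
    """Label a post 'early' or 'late' relative to an assumed cut-off."""
    return "early" if lag <= early_window else "late"


# Illustrative timestamps only.
lag = publish_lag(datetime(2024, 1, 2, 9, 0), datetime(2024, 1, 2, 15, 0))
bucket = lag_bucket(lag)
```

Once each post carries a bucket, lead conversion can be compared between early and late coverage of the same trend.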

The second timing pattern: the best day of the week for lead conversion is not the best day of the week for engagement. Our data showed meaningful divergence between "when content gets the most likes" and "when content produces the most qualified conversations." The peaks don't align.

The Data Analyst surfaced this by correlating post timing with lead scoring timestamps downstream. It's the kind of finding you can't reach by looking at either metric in isolation. It required tracing the chain.
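A check like this can be sketched in a few lines: group posts by publishing weekday, total likes and qualified conversations separately, and see whether the two peaks land on the same day. The record shape `(published_at, likes, qualified_conversations)` is an assumption, and the sample numbers are invented.

```python
from collections import defaultdict
from datetime import datetime

DAYS = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]


def peak_days(posts):
    """Return (peak weekday for likes, peak weekday for qualified conversations)."""
    likes = defaultdict(int)
    conversations = defaultdict(int)
    for published_at, like_count, conversation_count in posts:
        day = DAYS[published_at.weekday()]  # weekday(): Monday is 0
        likes[day] += like_count
        conversations[day] += conversation_count
    return max(likes, key=likes.get), max(conversations, key=conversations.get)


# Made-up sample data: (published_at, likes, qualified_conversations)
sample = [
    (datetime(2024, 1, 1, 9, 0), 120, 1),  # a Monday
    (datetime(2024, 1, 4, 9, 0), 40, 5),   # a Thursday
    (datetime(2024, 1, 8, 9, 0), 90, 0),   # a Monday
]
likes_peak, leads_peak = peak_days(sample)
```

If the two values differ, the posting schedule that maximizes likes is not the one that maximizes conversations.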

What Attribution Tracking Reveals That Dashboards Don't

A standard marketing dashboard is a snapshot. It shows you the current state of several numbers. It doesn't show you how those numbers relate to each other over time.

Attribution tracking is a story. It shows you what caused what, in sequence, with timestamps.

The difference in what you can learn from each is not incremental. It's categorical.

From a dashboard, you learn: engagement is up this week. From attribution, you learn: the three posts published Tuesday on the AI governance trend produced 14 qualified engagements, four of which converted to email conversations, two of which are in active outreach follow-up.

From a dashboard, you learn: this content type gets more likes. From attribution, you learn: this content type gets more likes but fewer leads, while this other content type gets fewer likes and four times the lead conversion rate.

From a dashboard, you learn: our follower count grew by 300 this month. From attribution, you learn: 280 of those followers came from one thread, 12 of them became leads, and two became customers — and that thread was a remix of a viral post spotted by the Trend Scout three days before the spike.
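The follower example can be read as a small funnel: 280 followers from one thread, 12 leads, 2 customers. A short sketch of the step-to-step arithmetic, using only those numbers (the `step_conversion` helper and stage labels are hypothetical):

```python
def step_conversion(stages):
    """stages: ordered list of (name, count). Returns (from, to, rate) per adjacent pair."""
    rates = []
    for (name_a, count_a), (name_b, count_b) in zip(stages, stages[1:]):
        rate = count_b / count_a if count_a else 0.0
        rates.append((name_a, name_b, rate))
    return rates


# Counts taken from the follower example above.
thread_funnel = [("followers_from_thread", 280), ("leads", 12), ("customers", 2)]
rates = step_conversion(thread_funnel)
```

That works out to roughly 4% of the thread's followers becoming leads and about 17% of those leads becoming customers, neither of which is visible in a follower count.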

The Trend Scout can check: "That AI ethics trend I flagged on the 14th — did it lead to posts? Did those generate engagement? Did that engagement produce leads?"

The answer is traceable. Every step of the chain is logged. The specialist that spotted the original signal can follow it all the way to a conversion and know whether its judgment was commercially valuable.

Three Things That Surprised Us

1. Most content produces exactly zero leads

This one is hard to write but important to say. When we traced every piece of content back through the chain, the distribution was stark. A small proportion of content was responsible for nearly all the lead generation. The rest generated engagement — sometimes substantial engagement — that produced nothing downstream.

The implication isn't "post less." It's "understand which content types and topic angles actually close the loop, and weight your output accordingly." You need volume to generate the distribution. But you should know what's doing the work.
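To see how concentrated lead generation is in your own data, two numbers are enough: the share of leads coming from the top slice of posts, and the share of posts with zero leads. A minimal sketch, assuming you already have a lead count per post; the 20% slice and the sample counts are illustrative choices, not our figures.

```python
def lead_concentration(leads_per_post, top_fraction=0.2):
    """Return (share of leads from the top posts, share of posts with zero leads)."""
    if not leads_per_post:
        return 0.0, 0.0
    ranked = sorted(leads_per_post, reverse=True)
    top_n = max(1, round(len(ranked) * top_fraction))
    total = sum(ranked)
    top_share = sum(ranked[:top_n]) / total if total else 0.0
    zero_share = sum(1 for n in ranked if n == 0) / len(ranked)
    return top_share, zero_share


# Made-up lead counts for ten posts.
top_share, zero_share = lead_concentration([0, 0, 0, 0, 0, 0, 0, 1, 3, 6])
```

In this invented sample, two posts out of ten account for 90% of leads and seven posts produce none.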

2. Response speed in community engagement is a lead signal

The Community Manager replies to posts across platforms. We expected this to drive brand awareness and relationship building — a long-cycle return.

What we didn't expect: how often community engagement was the initial touchpoint for accounts that later converted. When the Community Manager replied to someone's post, and that person then visited the brand profile, engaged with a content piece, and later appeared in the lead pipeline, every step of that chain was traceable.

Early, well-timed replies to relevant conversations were functioning as top-of-funnel touchpoints that our content-centric view of attribution had been missing entirely.
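Finding the first touchpoint for an account is a matter of sorting its recorded interactions by time. The sketch below assumes each interaction is stored as a `(timestamp, channel)` pair; the channel labels and the sample journey are invented to mirror the sequence described above.

```python
from datetime import datetime


def first_touch(touchpoints):
    """touchpoints: list of (timestamp, channel) for one account. Returns the earliest channel."""
    if not touchpoints:
        return None
    return min(touchpoints, key=lambda t: t[0])[1]


# Illustrative journey, deliberately out of order.
journey = [
    (datetime(2024, 1, 5, 14, 0), "content_engagement"),
    (datetime(2024, 1, 3, 9, 30), "community_reply"),
    (datetime(2024, 1, 3, 11, 0), "profile_visit"),
    (datetime(2024, 1, 9, 10, 0), "lead_pipeline"),
]
origin = first_touch(journey)
```

A content-only attribution model would credit `content_engagement` here; including community replies in the log moves the credit two days earlier.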

3. The gap between lead scoring and outreach timing is often the problem

The Lead Analyst scores accounts by engagement velocity, recency, and intent signals. The Outreach Specialist sends first contact. The gap between when an account scores high on intent and when outreach lands is measurable.

When we narrowed that gap — when the Outreach Specialist reached high-intent accounts while their intent signal was still active — conversion rates from outreach to conversation were significantly higher. Intent has a half-life. A lead that was ready to talk three days ago may have already made a decision.
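If you want to model "intent has a half-life" literally, exponential decay is the simplest form. This is a sketch of the idea, not a fitted model: the 48-hour half-life is an arbitrary placeholder, and the function name is hypothetical.

```python
def decayed_intent(score_at_peak: float, hours_since_peak: float,
                   half_life_hours: float = 48.0) -> float:
    """Exponential decay: the score halves every half_life_hours (assumed value)."""
    return score_at_peak * 0.5 ** (hours_since_peak / half_life_hours)


# With the assumed 48-hour half-life, compare outreach after 2 hours and after 3 days.
prompt_contact = decayed_intent(0.8, 2)
delayed_contact = decayed_intent(0.8, 72)
```

Under these assumed numbers, an account that scored 0.8 at peak is still close to 0.8 two hours later but below 0.3 after three days, which is the gap the outreach queue should be sorted by.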

What This Changes About How You Should Think About Marketing

If these patterns hold up, and we believe they do, the implications for how you allocate effort and attention are significant.

You should care about post frequency less and about topic quality more. Volume matters for distribution, but only the right topics actually close the loop.

You should track community engagement as attribution, not just relationship-building. The Community Manager's replies are first touchpoints. They belong in the funnel model.

You should measure outreach timing as a performance variable, not just outreach quality. A well-written message sent to a lead whose intent signal has faded is less valuable than a decent message sent at peak intent.

You should instrument the full chain before you optimize any piece of it. Optimizing engagement without knowing which engagement actually produces leads is how you end up with a beautiful dashboard and a flat pipeline.

What Audenci Does With This

We built the attribution chain into Audenci from the start. Every specialist's work is connected in sequence. The Trend Scout's signals trace forward to posts. Posts trace forward to engagement. Engagement traces forward to leads. Leads trace forward to outreach. Outreach traces forward to outcomes.

The Data Analyst doesn't just report — it explains. It looks for the correlations between upstream actions and downstream outcomes, and it surfaces the patterns that change how the team operates. When it finds that a particular content type has a high engagement-to-lead conversion rate, that signal propagates. The Strategist weighs it. The Content Creator receives updated direction. The cadence shifts.

The result is a marketing team that isn't optimizing for likes — it's optimizing for the chain that leads to revenue. Five cycles per day, every specialist connected, every action attributed.

That's not how most marketing teams operate. It's how they should.

Audenci tracks the full chain from trend to conversion automatically. 14 AI specialists, every action connected, every outcome attributed.